The world of indirect taxes is rapidly changing. Advancing technology and increasing dependence on ERP systems for indirect tax processes require new skills from indirect tax professionals in order to meet the increasing focus of external stakeholders on taxation and tax risks. With the help of data analytics, complex ERP landscapes and indirect tax processes can be unraveled. Anomalies in data can be signaled in good time and efficiently followed up, resulting in increased control of the end-to-end indirect tax process. Moreover, beyond increased control, data analysis can unlock additional tax value within the data that can serve as a basis for business improvements in indirect tax processes and tax supply chains.

Introduction

Data analytics is not new. Data analytics methodologies have been evolving since the first type of data analytics activities – known as “Analytics 1.0” – appeared in the mid-1950s ([Dave13]). Since then, the application of data analytics has expanded considerably, and supporting tools have become more and more sophisticated. Particularly in the finance, logistics and scientific areas, data analytics has grown rapidly, since the typical skillset of people working in those areas (e.g., an analytical mindset) includes the capability to work with large volumes of different sets of data. In the world of tax, however, the application of data analytics as part of day-to-day tax advisory work is relatively new. It only started approximately 5 years ago with straightforward analysis to check VAT return filings with regard to completeness. MS Excel is still the most commonly used technology with an analytical character that tax people apply in their regular work. One of the reasons why the application of data analytics in the area of tax has grown rapidly in the last 2 years is the tighter collaboration between tax people and people with an analytical background. The combination of both worlds (tax and IT) is a critical factor in the successful application of data analytics in the area of tax.

In this article we start by giving a short introduction outlining the typical characteristics of transactional taxes. Then we elaborate on the way large organizations manage, via their ERP systems, their tax application for large volumes of data, with the aim of preventing errors. Data analytics / detective monitoring plays an important role in managing indirect tax compliance – which is elucidated in the next section. Then we explain that analysis of large volumes of transactional data can reveal other non-tax insights, all of which come from the same data source. As a case study, we explain how KPMG’s global VAT data analytics solution – Tax Intelligence Solution (TIS) – is a good facilitator for managing tax compliance and opportunities via data analytics. We conclude this article with our vision on how data analysis can contribute to the application of “Horizontal Monitoring”, a concept that has been adopted by many Dutch organizations in accordance with the tax authorities in the Netherlands.

Transactional taxes in a nutshell

To enterprises, there is a big difference between managing direct taxes such as corporate income tax, and indirect taxes such as VAT/GST, customs duties and excise duties. The biggest difference is that, for indirect taxes, every single transaction (both sales and purchases) and many activities (such as transportation) require an assessment; these activities have their own individual tax treatment.

For corporate income tax, it is basically irrelevant where the customer is resident, which product he is buying, where the product is shipped from, and which incoterm was agreed upon. For indirect taxes, these elements are crucial to the question as to whether or not the transaction is subject to tax and, if so, at which rate and sometimes under which jurisdiction, etcetera.

Another big difference between direct and indirect taxes is the fact that, in most countries, indirect tax returns are rarely or never audited by the tax authorities at the time the taxpayer submits them. Only after an audit has been executed, which may take place years later, will the taxpayer have certainty about the tax returns he has submitted.

It is a simple conclusion that many transactions make it hard for (large) companies to manage their indirect tax processes. It becomes even more difficult for these companies if they are registered for indirect tax in different countries, in combination with a complex supply chain.

Example

A mid-sized Dutch company purchases goods from inside and outside the EU. Its customers are resident in many EU countries. To be able to supply these customers, the Dutch company has warehouses with stock in five countries outside the Netherlands. Under the assumption that there are no permanent establishments in the other countries, the Dutch company should submit one corporate income tax return, many months after the fiscal year has ended.

On the other hand, it is realistic to state that the company should submit at least 24 VAT returns, 24 EC sales lists and 72 Intrastat returns in 6 countries. The number of import declarations depends on the facts, but will be at least 12 (and can add up to hundreds). On top of this, the company has just one or a few weeks on average before these returns and declarations must be submitted.
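The minimum counts above can be reproduced with simple arithmetic. The sketch below assumes quarterly VAT returns and EC sales lists and monthly Intrastat returns in each of the six countries; these filing frequencies are our assumption for illustration (they are not stated in the text and vary by country and turnover).

```python
# Assumed filing frequencies per country (illustrative only; not from the article).
COUNTRIES = 6                  # the Netherlands plus five warehouse countries
VAT_RETURNS_PER_YEAR = 4       # assumed quarterly
EC_SALES_LISTS_PER_YEAR = 4    # assumed quarterly
INTRASTAT_PER_YEAR = 12        # assumed monthly

vat_returns = COUNTRIES * VAT_RETURNS_PER_YEAR
ec_sales_lists = COUNTRIES * EC_SALES_LISTS_PER_YEAR
intrastat_returns = COUNTRIES * INTRASTAT_PER_YEAR

print(vat_returns, ec_sales_lists, intrastat_returns)  # 24 24 72
```

With monthly VAT filing in some countries, the totals rise quickly, which is why the figures in the text are stated as minimums.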

Apart from the number of returns and declarations, every single transaction has its own local tax treatment; the rates differ (from 0% to 27%), and in some countries the reverse charge rule is applicable.

Automation is necessary to be able to manage this complex process.

Tax processing in ERP systems (end-to-end process)

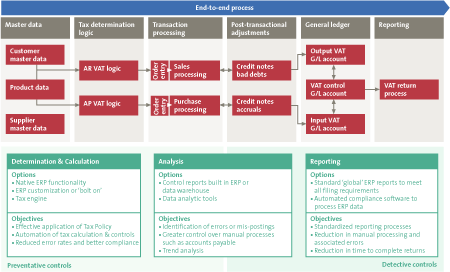

As a typical transaction-driven tax, VAT may be applicable on every single incoming or outgoing invoice. And not only invoices but also accruals, journals or other kinds of financial postings may be relevant from an indirect tax perspective. Present-day ERP systems support local and global businesses in their administration of logistic, production and financial processes and – more importantly – ensure that these processes are mutually connected in order to facilitate end-to-end business processes. From a VAT perspective, the process in the ERP system ends with a VAT returns report (see Figure 1).

In most cases, it is simply a matter of running a standard report that summarizes the totals (baseline amount and VAT amount) per configured and actually used tax code.
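Conceptually, such a report is a plain aggregation per tax code. The following minimal sketch illustrates the idea on a handful of posted lines; the tax codes, amounts and function name are hypothetical and do not represent any specific ERP report.

```python
from collections import defaultdict
from decimal import Decimal

# Hypothetical posted lines: (tax_code, base_amount, vat_amount).
posted_lines = [
    ("A1", Decimal("1000.00"), Decimal("210.00")),
    ("A1", Decimal("500.00"), Decimal("105.00")),
    ("A0", Decimal("2000.00"), Decimal("0.00")),
]

def vat_report(lines):
    """Sum base and VAT amounts per tax code that was actually used."""
    totals = defaultdict(lambda: [Decimal("0"), Decimal("0")])
    for tax_code, base, vat in lines:
        totals[tax_code][0] += base
        totals[tax_code][1] += vat
    return {code: (base, vat) for code, (base, vat) in totals.items()}

report = vat_report(posted_lines)
for code, (base, vat) in sorted(report.items()):
    print(code, base, vat)
```

Note that the aggregation only sees the tax code and the amounts; nothing in it can tell whether the tax code itself was the right one for the underlying transaction.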

Running a standard VAT returns report in an ERP system is a financial activity for which the tax code forms the basis. Besides the tax code, there is a limited amount of further information available to provide detailed information on the underlying transaction that was the initial trigger for the invoice. In order to assess the accuracy of the accounted tax code, more information – from different process angles – is needed to make an appropriate analysis. Hence, the VAT reporting activity typically does not belong to an area where sophisticated detective (data analysis) checks can be performed.

Before we further zoom in on the need for data analysis in the Accounts Payable (AP) and Accounts Receivable (AR) areas, it is important to explain the different natures of tax code determination between AP and AR.

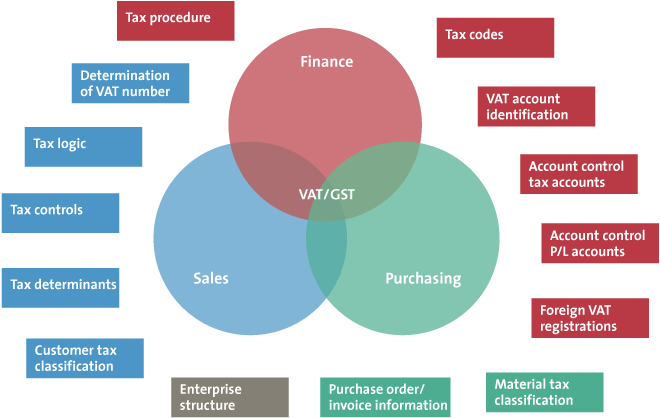

Figure 1. End-to-end VAT processes in ERP systems.

In the AR area, where invoices are typically created via sales orders and outbound deliveries, the most common ERP systems (such as SAP, Oracle) have functionality (“VAT logic”) in place to automatically derive tax codes for each outgoing sales invoice item. This should be an automated control, for which companies have to enrich the out-of-the-box standard VAT logic with business-specific VAT intelligence. There is no single ERP system on the market that automatically supports out-of-the-box VAT determination across all kinds of industries. Therefore, as we discussed in an earlier publication ([Bigg08]), it is of vital importance that VAT experts and IT experts collaborate closely during the design and implementation of the VAT logic in the ERP system. Once implemented and tested successfully, a solid governance process around the VAT process should ensure that internal (business) and external (law) changes are picked up and processed in a controlled way. Next to the implemented VAT logic – the core ERP tax determination – sufficient VAT application controls should also be implemented to make sure that the VAT logic is the single source of VAT determination. A good example of a VAT application control is the ERP configuration setting that stipulates that ERP-determined tax codes cannot be overridden manually. If such controls are missing, or cannot be relied upon due to weak IT general controls (e.g., the IT change management process), the system-driven VAT logic cannot ensure that the tax code decision is made by the ERP system in all cases.

In the AP area – generally speaking – no automated tax code determination is in place as part of out-of-the-box ERP implementations. Invoices that suppliers issue to companies are processed (manually or automatically) within the AP department, where AP clerks have to read the invoice and the tax consequences mentioned on it in order to manually pick an appropriate tax code that matches the tax application on the actual invoice. Identifying the correct tax code requires basic knowledge of the different country-specific VAT systems and jurisdictions. Furthermore, the VAT rules and percentages change from time to time, which means that AP clerks need to be trained on the latest VAT derivation rules on a regular basis. What we often see in the market for these kinds of manual processes is that VAT departments design VAT derivation manuals that include VAT decision trees. These decision trees ultimately lead to a tax code that AP clerks need to select from the overall list of available tax codes. One can imagine that this is an error-sensitive activity, since the number of tax codes from which the AP clerk can choose easily exceeds 100 or sometimes even 500. In addition to this, companies that have implemented financial shared service centers (SSC) have defined hard KPIs, based partly on the total number of invoices that employees need to process on a daily basis. As the work has a strongly repetitive character, a wrong tax code is easily picked. Furthermore, few preventative (system-based) controls are in place to prevent mistakes. All these typical characteristics of the AP process at large organizations give rise to the need for detective VAT quality controls, such as data analytics.
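One simple detective control of this kind compares the VAT amount booked on a purchase invoice with the rate implied by the selected tax code. The sketch below is illustrative only: the tax codes, rates, field names and tolerance are hypothetical assumptions, not taken from any particular ERP configuration.

```python
from decimal import Decimal

# Hypothetical tax code table: code -> expected VAT rate (illustrative values).
TAX_CODE_RATES = {
    "V1": Decimal("0.21"),
    "V2": Decimal("0.09"),
    "V0": Decimal("0.00"),
}

def rate_mismatches(invoices, tolerance=Decimal("0.01")):
    """Return ids of invoices whose booked VAT deviates from base * code rate."""
    flagged = []
    for inv in invoices:
        expected = inv["base"] * TAX_CODE_RATES[inv["tax_code"]]
        if abs(expected - inv["vat"]) > tolerance:
            flagged.append(inv["id"])
    return flagged

ap_invoices = [
    {"id": "AP-001", "tax_code": "V1", "base": Decimal("100.00"), "vat": Decimal("21.00")},
    {"id": "AP-002", "tax_code": "V0", "base": Decimal("200.00"), "vat": Decimal("42.00")},
    {"id": "AP-003", "tax_code": "V1", "base": Decimal("50.00"), "vat": Decimal("4.50")},
]

flagged = rate_mismatches(ap_invoices)
print(flagged)  # ['AP-002', 'AP-003']
```

A flagged invoice is only a candidate for follow-up: the mismatch may point to a wrongly selected tax code, but also to a supplier invoice with mixed rates.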

All these transactional processes and processed data rely heavily on the timely availability and accuracy of master data. Generally speaking, the key master data components that are most relevant from a VAT perspective are suppliers, customers and products. Accurate and complete VAT-relevant information needs to be captured for each of those master data components. A basic example of a critical VAT-relevant customer master data field is the country where the customer resides. The existing output VAT logic that drives the tax-code decisions uses the country where the customer is established in almost all cases. It may be evident, but in cases where the customer country in the master data is not a reliable field, how can the accuracy of the tax code decision be guaranteed? The answer is simple: it cannot be guaranteed. Almost all organizations that have implemented ERP systems and are exposed to 100,000+ elements of master data face the challenge of having a solid master data process in place, containing sufficient checkpoints to ensure that master data are stored in such a way that they reflect the actual situation.

Another aspect – next to correct master data – that needs to be taken into account in order to rely on the automated VAT determination is to ensure that the actual master data (and thus actual transaction) are always used to calculate the tax code. In other words, how do we know that the tax code decision is based on the correct business flow parameters?

An example: an employee enters a sales order, delivers the goods and creates the invoice. Suppose that the real transaction is a delivery from the Netherlands (country of the warehouse) to Germany (country of the delivery address). Ideally, the ERP system should pick out both countries from the master data in the system. However, if the VAT logic is configured in such a way that the country to which the goods are shipped is derived from the country to which the invoice is sent, a wrong goods flow may be used as the underlying information to calculate the tax code. Another example of incorrect VAT data being used to pick out the tax code is when employees have the possibility to manually enter a (delivery or administrative) country that will be picked out by the VAT logic to derive a tax code. In this case, the actual transaction data (from the master data) are ignored by the VAT calculation mechanism, with a potential risk of inaccurate tax codes.
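The first risk can be tested retrospectively by comparing the country of delivery with the country of invoicing on each sales order. The sketch below assumes an extract in which both countries are available per order; the field names and order data are hypothetical.

```python
def bill_to_proxy_risks(orders):
    """Return ids of orders whose delivery country differs from the invoice country.

    For these orders, VAT logic that uses the bill-to country as a proxy for the
    ship-to country would base the tax code on the wrong goods flow.
    """
    return [
        order["order_id"]
        for order in orders
        if order["ship_to_country"] != order["bill_to_country"]
    ]

# Hypothetical sales orders shipped from a Dutch warehouse.
orders = [
    {"order_id": "SO-1", "ship_from_country": "NL", "ship_to_country": "DE", "bill_to_country": "DE"},
    {"order_id": "SO-2", "ship_from_country": "NL", "ship_to_country": "DE", "bill_to_country": "FR"},
]

at_risk = bill_to_proxy_risks(orders)
print(at_risk)  # ['SO-2']
```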

What makes the end-to-end process even more complex is that the VAT-relevant information that should feed the VAT decisions is widely distributed across different modules in the ERP system.

Figure 2. VAT is scattered through different areas in the ERP system.

Accurate VAT decisions can only be made by a combination of master data, logistics, procurement and financial information. Besides challenges to automate VAT decisions, it is not easy to perform retrospective analysis to test the effectiveness of the VAT controls, VAT logic and manual VAT decisions. Further on in this article, we explain how these challenges can be overcome by applying what we call ‘sophisticated VAT cubing techniques’ to extracted transactional and master data.

VAT monitoring through data analytics

Tax authorities, supervisors, financial investors and other stakeholders are increasingly focused on taxation and tax risks, and expect companies to be in control of the main tax risks. For a company to be in control of its main tax risks means that it must be aware of the main risks it is running. A Tax Control Framework (TCF) – a fiscal risk-and-controls monitoring mechanism that is part of the Business Control Framework – consists of policies/guidelines, a clear overview of tax responsibilities and accountabilities, internal tax procedures/processes and controls (both hard and soft controls), among other things. A proper implementation of a TCF within a company’s daily tax processing system can reduce fiscal errors, spot fiscal opportunities in good time, and increase the quality of correct fiscal returns.

Part of the implementation of a fiscal risk-and-controls monitoring mechanism such as a TCF is a periodic assessment of the quality of the monitoring mechanism. Whereas assessments were previously undertaken manually and relied on the subjective opinions of fiscal experts – focusing on the existence of documentation, processes and testing manual controls – we now see that the internal and external stakeholders’ assessment focus is shifting to an increasingly automated approach consisting of the testing of IT-dependent/IT-application controls and (substantial) transactional data testing using advanced tax data analytics. This shift is mainly due to increasingly complex tax supply chains and increasing reliance on ERP-sourced information for tax processing.

Applied to VAT – although this also holds true for other transactional taxes – application controls are in place to ensure that automatic VAT derivation on sales/purchase invoices, supported by sophisticated logic implemented in the ERP system, cannot be disrupted. Examples of VAT application controls are:

- Every sales invoice contains a tax code.

- Tax codes on sales invoices cannot be manually overridden.

- Only authorized users are allowed to create/change tax codes and tax parameters in the ERP system.
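The first two controls can also be tested on the transactional data itself. The sketch below assumes an extract that carries both the tax code determined by the system and the tax code actually posted; these field names and values are hypothetical and depend on what the ERP system logs.

```python
# Hypothetical extract of sales invoice items; field names are illustrative only.
sales_invoices = [
    {"id": "S-1", "posted_tax_code": "A1", "determined_tax_code": "A1"},
    {"id": "S-2", "posted_tax_code": "", "determined_tax_code": "A1"},
    {"id": "S-3", "posted_tax_code": "A0", "determined_tax_code": "A1"},
]

def missing_tax_code(invoices):
    """Invoices that violate 'every sales invoice contains a tax code'."""
    return [inv["id"] for inv in invoices if not inv["posted_tax_code"]]

def overridden_tax_code(invoices):
    """Invoices whose posted tax code differs from the system-determined one."""
    return [
        inv["id"]
        for inv in invoices
        if inv["posted_tax_code"]
        and inv["posted_tax_code"] != inv["determined_tax_code"]
    ]

missing = missing_tax_code(sales_invoices)
overridden = overridden_tax_code(sales_invoices)
print(missing, overridden)  # ['S-2'] ['S-3']
```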

The effectiveness of application and authorization controls – combined with effective IT General Controls – only gives partial assurance of the correctness and completeness of input and output VAT activity with regard to transactional data. An efficient and effective way of achieving this objective is to make use of sophisticated VAT data analytics. These targeted analytics test the transactional data against VAT/legal requirements, and can be used to assess transactional data on risk areas (e.g., focusing on underpayment of output VAT, claiming too much input VAT), opportunity areas (e.g., focusing on overpayment of output VAT, input VAT recovery, input VAT accruals) and VAT working capital benefits.

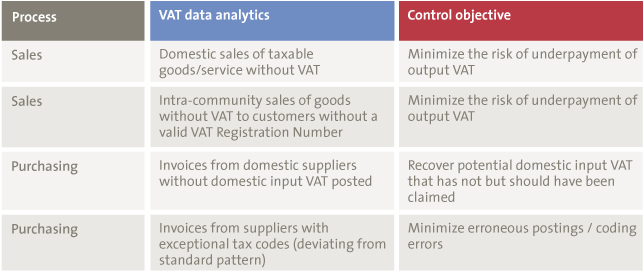

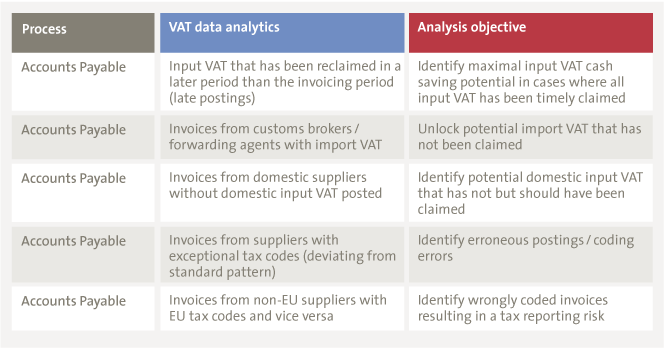

Table 1. Examples of VAT data analytics (including an example control objective).

An initial assessment of the outcomes of the analytics typically reveals false positives (transactions that are incorrectly selected by the analytics and do not contribute to the VAT risk/opportunity) that need to be removed. Once the VAT data analytics have been optimized for purpose, they can be considered as efficient and effective assessment instruments.

One of the current challenges tax managers face is to define the right mixture of manual controls, VAT (application) controls and VAT data analytics. Reporting requirements and increased control from tax authorities, lack of time/resources, and increasingly complex and globalized supply chains in combination with increasing IT dependence are factors that reinforce a company’s need for a VAT-monitoring mechanism.

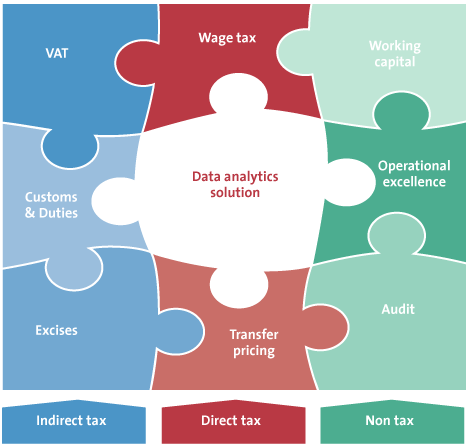

Other tax and non-tax value from data analytics

We mentioned that VAT-relevant information is widely distributed throughout different areas of ERP systems, and that all incoming and outgoing invoices are – in some way – relevant from a VAT perspective. When data analytics is applied to analyze the quality of the VAT process, the same data contain many more insights than those that are visible from an indirect tax perspective alone. One can think of other taxes that have a strong transactional character, such as transfer pricing or customs and excise duties. Of course, in order to analyze data from the perspective of these kinds of taxes, you need to enrich the transactional data with the additional information that is needed to perform meaningful checks. TARIC code information or insurance costs per transaction are typical supplementary information from a customs and duties perspective. When the VAT cubes are enriched with this information, a (tax) data analyst can perform analytical tests on the same data for different types of taxes. This avoids the need for multiple data extracts and multiple tools that use different data sources for the data extracts.

Figure 3. A solution covering indirect tax, direct tax and non-tax analytics.

From a transfer-pricing perspective, the VAT transactional data cubes can be used to analyze intercompany margins/deviations per product (groups), goods flows and periods and suchlike. From practical experience, we see that interesting insights can be unlocked from these kinds of analyses. This transforms the traditional style of intercompany pricing analysis, which is a typical Excel-based exercise in most cases, to a real data-driven approach where dozens of transactional data flows form the basis of the analysis. Furthermore, the data do not lie. They form the source of financial reporting and are an important starting point for intercompany transfer-pricing analysis.

Besides taxes, it is also possible to look at the same data set from a non-tax angle. One can think of procurement, audit, working capital or general process improvement / operational excellence.

Example analytics in the non-tax area are:

- Possible duplicate invoices

- Low-value AP invoices

- Segregation of duties

- Invoices without a purchase order

- Early payments to suppliers
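Two of these analytics are sketched below on the same AP data set: possible duplicate invoices and invoices without a purchase order. The matching key (supplier, invoice reference and amount) and all field names are assumptions chosen for illustration; in practice the duplicate criteria are usually tuned to limit false positives.

```python
from collections import defaultdict

def possible_duplicates(invoices):
    """Group invoice ids that share supplier, reference and amount."""
    groups = defaultdict(list)
    for inv in invoices:
        key = (inv["supplier"], inv["reference"], inv["amount_cents"])
        groups[key].append(inv["id"])
    return [ids for ids in groups.values() if len(ids) > 1]

def without_purchase_order(invoices):
    """Return ids of invoices that carry no purchase order number."""
    return [inv["id"] for inv in invoices if not inv.get("po_number")]

# Hypothetical AP invoices; amounts are held in cents to avoid rounding issues.
ap_invoices = [
    {"id": "AP-1", "supplier": "SUP-10", "reference": "INV-778", "amount_cents": 121000, "po_number": "PO-5"},
    {"id": "AP-2", "supplier": "SUP-10", "reference": "INV-778", "amount_cents": 121000, "po_number": "PO-5"},
    {"id": "AP-3", "supplier": "SUP-20", "reference": "INV-001", "amount_cents": 5000, "po_number": None},
]

duplicates = possible_duplicates(ap_invoices)
no_po = without_purchase_order(ap_invoices)
print(duplicates, no_po)  # [['AP-1', 'AP-2']] ['AP-3']
```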

The strength of the combination of tax and non-tax analytics on the same data set lies in the fact that different departments in the same organization can benefit from a single investment in data analytics solutions. This increases the chance of adoption of a tool that was initially intended for VAT data analytics only. For IT departments, which are a key enabler when it comes to the actual implementation of a VAT data analytics tool, it is easier to support and invest in new technologies when departments other than the tax department also benefit from the data analytics solution.

Case study: “Tax Intelligence Solution”

KPMG and Meijburg & Co have developed an indirect tax data analytics suite called “Tax Intelligence Solution (TIS)”, enabling clients as well as KPMG indirect tax professionals to:

- Obtain fact-based insights to identify irregularities.

- Highlight opportunities to improve the efficiency, effectiveness and harmonization in business operations and financial processes.

- Identify both tax and non-tax value adds such as missed KPIs for a business-outsourcing provider.

- Accelerate input tax credits and improve working capital.

- Optimize supply chain from a tax perspective in order to eliminate refund claims in jurisdictions where refunds are difficult/ailing.

- Identify root causes of issues such as: who in the organization overruled standard systems tax logic and on which transactions?

- Offer supplier discounts through the prompt processing of transactions and payments.

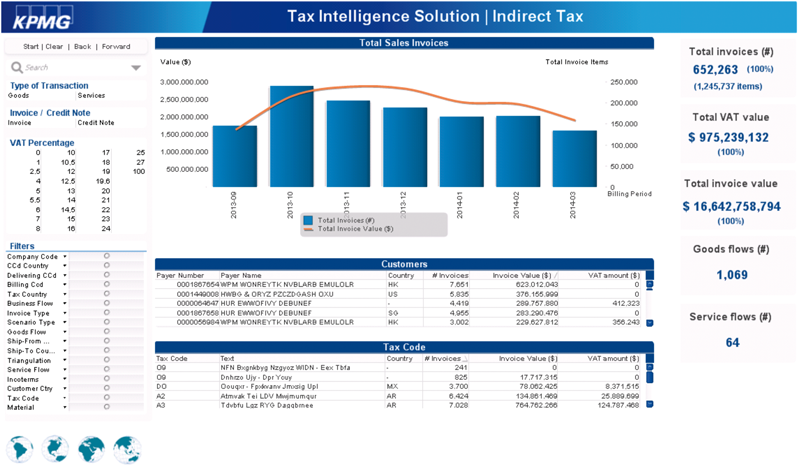

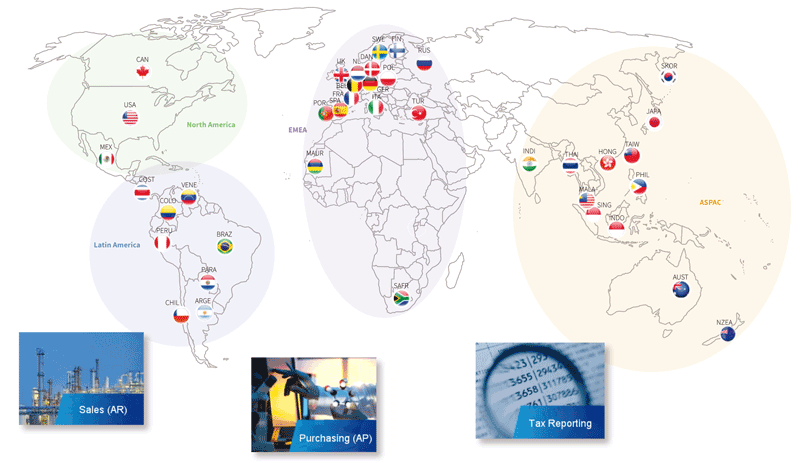

Figure 4. TIS: a global indirect tax data analytics solution.

Tax Intelligence Solution – How does it work?

Figure 5. Tax Intelligence Solution: how does it work?

Phase 1: Data extraction

TIS makes use of raw data that reside in a company’s ERP system. As tax determinant data points are scattered throughout most ERP-system data, a thorough understanding of database modeling and query techniques is necessary to derive tax analytical value from the data. In addition, basic reports in ERP systems only provide a single view on the data – e.g., accounting, logistics – which results in limited information being available to end-users to perform data analytics. TIS offers standard data extraction utilities for the most common ERP systems to automatically download all the tax-relevant raw data – accounting, logistic, master data and customizing – with minimum effort being required from the client’s IT resources.

Phase 2: Data cubing

After data extraction, raw data need to be staged and loaded onto a server platform for transformation purposes. Within this platform, the raw data tables will be combined to form so-called ‘tax cubes’ respecting the database relational model of the underlying ERP system. On average, 20-30 raw data tables will be used to produce a single tax cube. Besides data modeling, the enrichment of data takes place at this stage: intelligent fields are added to the data cube to enable the performance of smart analytics at a later stage in the process. Data cubes exist for different parts of the indirect tax processes (e.g., AR, AP, tax reporting) and can also be combined and stacked for cross-cube analytics.

Example

The AR VAT cube consists of a combination of accounting, invoice, delivery, order and master data – representing the end-to-end accounts-receivable VAT process. Moreover, it contains numerous intelligent fields such as goods flow (a combination of ship-from and ship-to information) and VAT scenario recognition (whether the transaction is part of a triangular supply, a sale of consignment goods or a sale of services, to each of which different VAT legislation applies).
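The cubing idea can be illustrated in miniature: invoice data are joined with delivery and customer master data, and a derived goods flow field is added. This is a simplified sketch with three hypothetical tables and made-up field names; it does not represent the actual TIS data model, which combines 20-30 raw tables per cube.

```python
# Hypothetical raw tables; keys and field names are illustrative only.
invoices = [
    {"invoice_id": "I1", "delivery_id": "D1", "customer_id": "C1", "tax_code": "A1"},
    {"invoice_id": "I2", "delivery_id": "D9", "customer_id": "C1", "tax_code": "A1"},
]
deliveries = {"D1": {"ship_from_country": "NL", "ship_to_country": "DE"}}
customers = {"C1": {"country": "DE"}}

def build_ar_cube(invoices, deliveries, customers):
    """Join invoices with delivery and customer data and add a goods flow field."""
    cube = []
    for inv in invoices:
        delivery = deliveries.get(inv["delivery_id"], {})
        customer = customers.get(inv["customer_id"], {})
        row = {**inv, **delivery, "customer_country": customer.get("country")}
        ship_from = delivery.get("ship_from_country")
        ship_to = delivery.get("ship_to_country")
        # Derived ("intelligent") field: only filled when both ends are known.
        row["goods_flow"] = f"{ship_from}-{ship_to}" if ship_from and ship_to else None
        cube.append(row)
    return cube

cube = build_ar_cube(invoices, deliveries, customers)
print([(row["invoice_id"], row["goods_flow"]) for row in cube])
# [('I1', 'NL-DE'), ('I2', None)]
```

Rows for which no goods flow can be derived, such as the second invoice here, are themselves a useful signal of incomplete or inconsistent source data.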

Phase 3: Reporting

Data cubes are loaded into a business intelligence layer providing the end-users with smart tax dashboards containing predefined VAT analytics available out-of-the-box, and helpful key facts and figures. The TIS reporting front-end contains over 80 standard VAT analytics and over 20 standard dashboards. Client-specific, customized analytics and dashboards are also possible.

Figure 6. Example of TIS dashboard: sales overview.

Analysis scoping

After agreeing a VAT data analytics project with the client, a kick-off meeting will be held with the client to gain in-depth understanding of the client’s indirect tax processes. The main goal of this meeting – besides the usual scoping of entities and the time scope to be analyzed – is a joint selection of areas that will be targeted with VAT analytics and a definition of the analytics that are fit-for-purpose to cover the project objectives.

Example

Another interesting area for VAT data analytics is in Shared Service Centers where accounts payable clerks process large volumes of invoices. The quality of tax coding by these AP clerks may give rise to potential input VAT recovery opportunities. Table 2 shows typical example analytics that are applicable in this case.

Table 2. Examples of VAT data analytics (including an example analysis objective).

Data extraction

Based on the scoping (entities, period, analytics), the data extraction scope will be determined and be executed by the client’s IT department. Data will be securely transferred from client premises to KPMG premises.

Generate client-specific TIS and perform data analysis

After receiving client data, tax data scientists will prepare an initial version of TIS – taking into account the agreed scoping of analytics. These specialists will take a first high-level glance at the TIS, performing sanity checks (reconciliations, spot checks such as revenue totals, spend totals, top customers, top suppliers) to ensure the reliability of the data for further analysis. In conjunction with indirect tax professionals, data analysis will be performed using TIS as a professional support tool. The main outcomes of this data analysis will be quantified, fact-based attention points/observations, and a sample of underlying sales/purchase invoices for validation purposes. Next, the client will retrieve the invoices, and indirect tax specialists will test the selected invoices against the reason behind the sampling. The conclusion can be either that the invoice is correct from a VAT point of view, or that the invoice confirms the hypothesis being tested. In the first case, the data analysis needs to be technically amended to suppress transactions with these characteristics, whereas the latter case implies that this transaction (and potentially other similar transactions) holds a VAT risk and/or opportunity, based on the testing hypothesis. More invoices can be requested on the basis of the results of the first invoice sample.
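A typical sanity check of this kind is a reconciliation of the cube total against a control total from the general ledger. The sketch below is a minimal illustration; the field names, amounts and zero tolerance are assumptions, not a description of the checks built into TIS.

```python
from decimal import Decimal

def reconcile(cube_rows, ledger_total, tolerance=Decimal("0.00")):
    """Compare the summed net amount in the cube with a ledger control total.

    Returns a (reconciles, difference) tuple.
    """
    cube_total = sum((row["net_amount"] for row in cube_rows), Decimal("0"))
    difference = cube_total - ledger_total
    return abs(difference) <= tolerance, difference

# Hypothetical cube rows and ledger control total.
cube_rows = [
    {"invoice_id": "I1", "net_amount": Decimal("1000.00")},
    {"invoice_id": "I2", "net_amount": Decimal("250.50")},
]

ok, difference = reconcile(cube_rows, Decimal("1250.50"))
print(ok, difference)  # True 0.00
```

A non-zero difference would be investigated before any analytics are run, since it suggests that the extract is incomplete or that tables were joined incorrectly.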

Hold a validation workshop and write a report

A validation workshop will be held along with the client’s finance, tax and IT professionals to discuss the initial findings of the data analysis. TIS will act as a facilitation mechanism to provide clients with key, factual insights that support the findings. The client’s input is helpful to obtain deeper understanding of the data analytics outcomes, to identify potential root-causes, and ultimately verify the correctness of the analytics performed. Based on the validated data analytics, a report will be written containing observations, recommendations and suggested next steps. Detailed outcomes of the data analytics are extracted from TIS and distributed to the client as well.

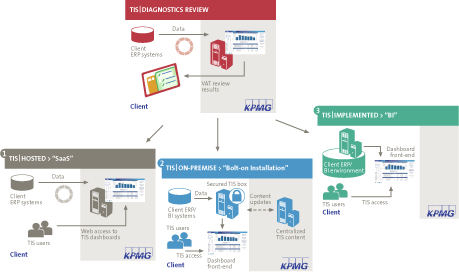

One-off versus insourced/outsourced solution

The process approach described is typical for any client who comes into contact with indirect tax data analytics for the first time. The purpose of the project, however, can be completely different, varying from a risk assessment of outgoing sales transactions to a Shared Service Center accounts payable VAT opportunity review. Next to these off-line, historical data analytics projects, clients are requesting continuous access to indirect tax data analytics solutions themselves. Figure 7 visualizes the different solutions that can be installed. A distinction can be made between insourced or outsourced solutions.

Figure 7. TIS implementation offerings.

TIS SaaS: an outsourced, cloud solution that enables clients to connect externally to the data analytics dashboards. A periodic data transfer takes place between the client and KPMG for refresh purposes. This solution is mainly of interest to medium-sized companies with limited end-users (<10) and smaller database sizes.

TIS On-premise: an insourced, on-premise data analytics solution bolted onto the client’s ERP/BI platform that enables clients to have continuous access to data analytics dashboards. Ownership of analytics and dashboards is not transferred. Periodic maintenance, including updates to analytics, dashboards and legislation, will be taken care of by KPMG. This solution is mainly of interest to large multinationals with 10 to 100 end-users that do not want to maintain the solution in tax-technical terms themselves.

TIS Implemented: the same as TIS On-premise, but ownership of the analytical content, dashboards and corresponding maintenance is also transferred. This solution is mainly of interest to huge multinational companies with 100+ end-users and an IT department that has the capacity to perform maintenance themselves.

A fit-for-purpose solution will be chosen to cover client needs (from a costing perspective as well as a maintenance/governance perspective).

Typical project findings/benefits

- There were up to 4% of sales invoices where the automatically calculated tax code had been manually overwritten by accounts receivable employees.

- There were up to 3% of sales invoices where no indirect tax had been accounted for on domestic transactions where local VAT should have applied.

- There were up to 3% of purchase invoices from domestic suppliers for which no tax code had been assigned, resulting in input VAT that was not recovered.

- There were up to 1% of purchase invoices where an output tax code was incorrectly assigned.

Horizontal Monitoring and data analytics

In a number of countries, the relationship between taxpayers and the tax authorities has changed. In the Netherlands, Horizontal Monitoring (in Dutch: “Horizontaal Toezicht”) was introduced about 10 years ago.

Horizontal Monitoring can be described as having a sustainable partnership with the tax authorities, based on (justifiable) mutual trust, showing transparency and respect, with codified responsibilities of both parties, with codified mutual agreements, which are respected at all times.

Horizontal Monitoring implies that the company has already implemented or will implement a Tax Control Framework. This is an internal control instrument that focuses particularly on the business’s tax processes. It forms an integral part of a company’s Business or Internal Control Framework.

In a system of Horizontal Monitoring, such as the one deployed by the tax authorities, there is scope for monitoring by the tax authorities themselves, but a basic premise is that the tax authorities do not do work that has already been done by others (e.g., internal and/or external auditors). The object of monitoring is the Tax Control Framework, because it describes the business’s system for controlling tax processes.

Data analytics can be regarded as an important element of the Tax Control Framework, as the outcome demonstrates the level of quality of the tax process. It also reveals the root cause of the weak spots in the system and enables the company to take the necessary measures to ensure that mistakes will no longer occur.

Conclusion

In this article we have outlined how data analytics can be applied in the area of transactional taxes. Because organizations are becoming more globalized and transactional flows are becoming more complicated, the need to monitor the accuracy of tax calculations via data analytics has significantly increased. In addition, the tax authorities are becoming increasingly well equipped with sophisticated tools and specialists to perform VAT audits in a highly efficient way. In order to be proactively in control from a tax perspective – for yourself and for the tax authorities – a combination of a sound set-up of the ERP system (authorizations, VAT application controls, correct tax rates) with a VAT monitoring mechanism (data analytics or the application of statistical sampling techniques) is needed. Data analysis should never be a goal in itself but is always a means that helps measure VAT control objectives and contributes to the realization of effective processing of VAT calculations.

This can only be successfully achieved when multidisciplinary teams (tax and technology) collaborate and demonstrate willingness and capability to step into each other’s worlds and try to speak each other’s language.

References

[Bigg08] S.R.M. van den Biggelaar, S. Janssen and A.T.M. Zegers, VAT and ERP – What a CIO should know to avoid high fines, Compact 2008/2.

[Dave13] Thomas H. Davenport, Analytics 3.0: The Era Of Impact, Harvard Business Review 2013/12.